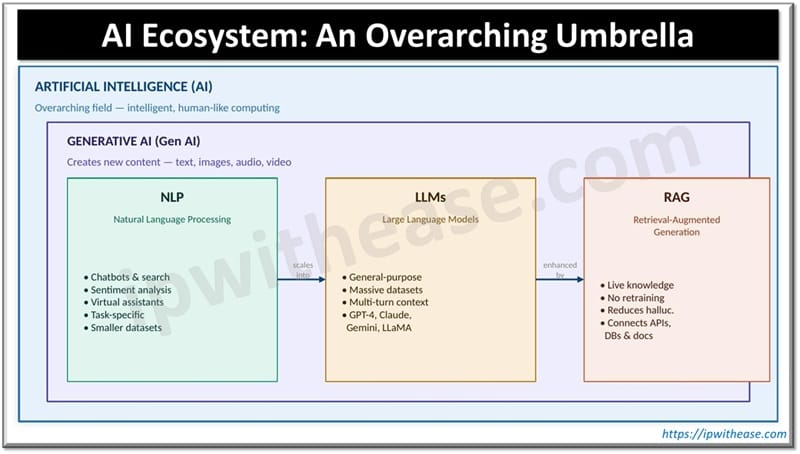

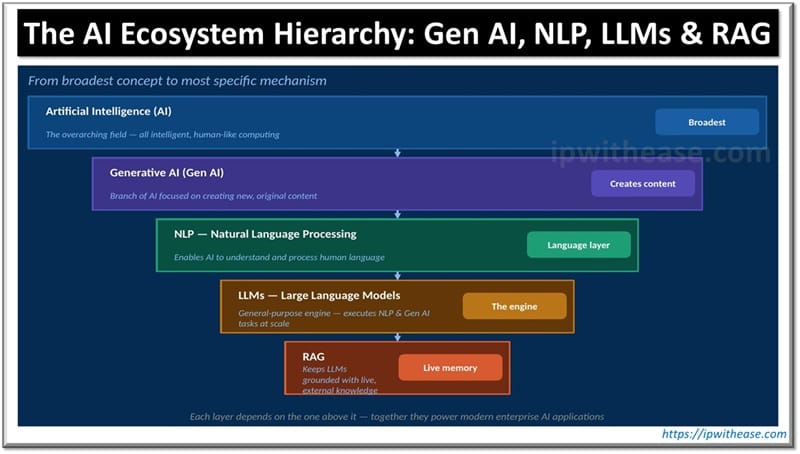

The AI ecosystem is not a single technology but a layered, interdependent set of disciplines and tools — each solving a distinct problem and contributing to the capabilities of the next layer above it.

- AI provides the overarching framework for building intelligent systems.

- Generative AI enables systems to create new, original content from learned patterns.

- NLP gives AI the ability to understand and process human language, the primary interface between users and intelligent systems.

- LLMs overcome the limitations of traditional NLP by operating at massive scale, performing multiple language tasks simultaneously, and maintaining conversational context.

- RAG solves the LLM knowledge problem, keeping AI responses accurate, current, and grounded in real-world information without the cost of full model retraining.

For enterprise IT teams, understanding this hierarchy is the first step toward evaluating, designing, and deploying AI solutions that are not just technically impressive but genuinely reliable, maintainable, and fit for production use.

This blog post breaks down the AI ecosystem from the ground up, starting with the overarching umbrella of Artificial Intelligence and drilling down into Generative AI, NLP, LLMs, and finally RAG. It explains each component’s role and limitations, and how the components work together to power modern AI applications.

AI Ecosystem: An Overarching Umbrella

Artificial Intelligence (AI) is the foundational field of computer science focused on building systems that can perform tasks that typically require human intelligence. These tasks include:

- Problem solving and logical reasoning

- Learning from data and adapting over time

- Decision making under uncertainty

- Understanding and generating human language

- Visual perception and image recognition

Think of AI as a large umbrella under which multiple specialized disciplines exist. Generative AI, NLP, LLMs, and RAG are all subsets of or tools within this broader AI universe — each serving a distinct but complementary role.

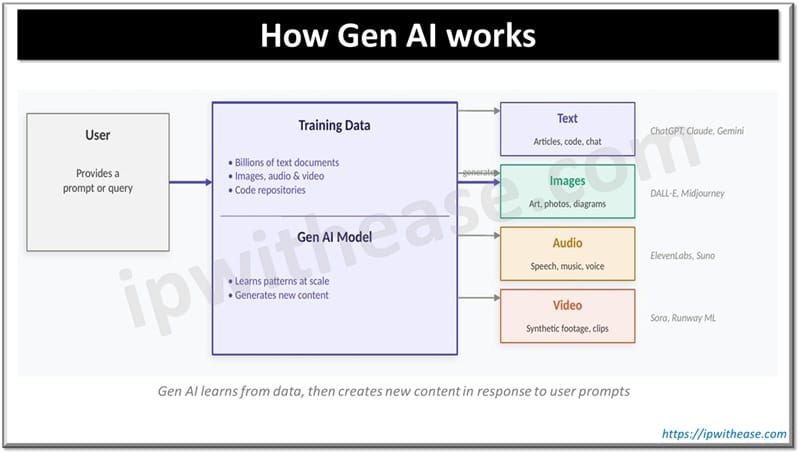

What is Generative AI (Gen AI)?

Gen AI is a branch of artificial intelligence that focuses on creating new and original content rather than simply classifying, predicting, or retrieving existing content. Gen AI systems can generate:

- Text: articles, summaries, code, email drafts, documentation

- Images: photorealistic visuals, artwork, diagrams

- Audio: speech synthesis, music generation, voice cloning

- Video: synthetic video content, animations, deepfakes

Gen AI accomplishes this by learning from large-scale training datasets. The model is exposed to enormous quantities of human-created content (billions of text documents, images, audio samples) and learns the underlying patterns, structures, and relationships within that data. When prompted by a user, the model uses those learned patterns to generate new, contextually relevant output.
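
To make "learn patterns, then generate from them" concrete, here is a deliberately tiny sketch in Python. It uses a bigram (word-pair) model, which is far simpler than the neural networks behind real Gen AI systems. The corpus and the function names `train_bigram_model` and `generate` are illustrative assumptions, not part of any real library.

```python
import random
from collections import defaultdict


def train_bigram_model(corpus):
    """Record which words follow which: a toy stand-in for 'learning patterns'."""
    model = defaultdict(list)
    for sentence in corpus:
        words = sentence.lower().split()
        for current_word, next_word in zip(words, words[1:]):
            model[current_word].append(next_word)
    return dict(model)


def generate(model, prompt_word, max_words=8, seed=0):
    """Generate text by repeatedly sampling a word seen after the previous one."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    output = [prompt_word]
    while len(output) < max_words and output[-1] in model:
        output.append(rng.choice(model[output[-1]]))
    return " ".join(output)


# Hypothetical training data; a real model would see billions of documents.
corpus = [
    "the firewall blocks unwanted traffic",
    "the router forwards traffic between networks",
    "the firewall inspects traffic between networks",
]
model = train_bigram_model(corpus)
print(generate(model, "the"))
```

The generated sentence may not appear verbatim in the corpus, yet every word pair in it was observed during training. That is the same principle, at toy scale, as a model producing new output from learned patterns.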

How Gen AI Differs from Traditional AI

| Parameter | Traditional AI | Generative AI |
|---|---|---|
| Primary goal | Classify, predict, or detect | Create new, original content |
| Output type | Label, score, or decision | Text, image, audio, video |
| Training data | Structured, task-specific datasets | Massive, diverse unstructured data |
| Flexibility | Task-specific | General-purpose across many tasks |
| Examples | Spam filter, fraud detection | ChatGPT, DALL-E, Gemini, Claude |

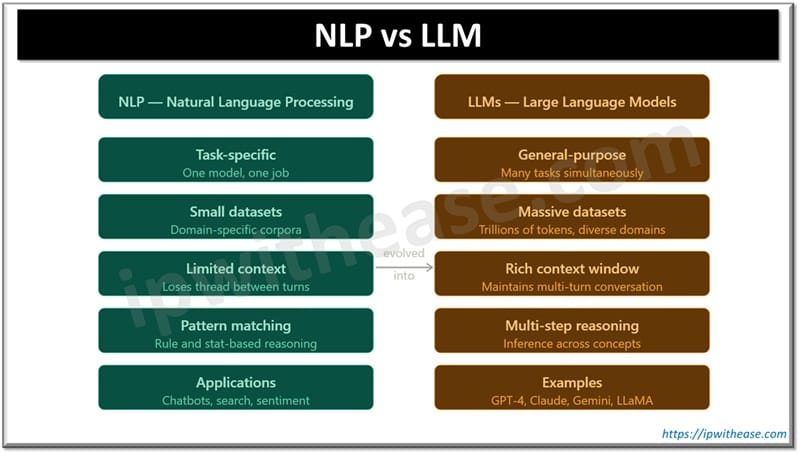

What is Natural Language Processing (NLP)?

NLP is the branch of AI that enables machines to understand, interpret, and respond to human language, both written and spoken. It is the technology that makes it possible for a computer to read a sentence, extract meaning from it, and formulate a relevant response.

Capabilities of NLP

- Text classification: Categorizing documents, emails, or messages by topic or intent

- Sentiment analysis: Detecting whether a piece of text expresses positive, negative, or neutral sentiment

- Intent recognition: Identifying what a user is trying to accomplish with their input

- Named entity recognition (NER): Extracting people, organizations, places, and dates from text

- Machine translation: Translating text between languages

- Speech-to-text and text-to-speech: Converting between spoken and written language

NLP is the foundation of many familiar enterprise tools — chatbots, virtual assistants, search engines, and automated customer support systems all rely on NLP to function.

Limitations of NLP

- Task-specific by design: Most NLP models are built and trained for a single, specific task (e.g., sentiment analysis OR translation — not both simultaneously).

- Smaller training datasets: NLP models are typically trained on comparatively modest, domain-specific datasets, limiting their breadth of knowledge.

- Poor conversational context: Traditional NLP models struggle to maintain coherent context across a multi-turn conversation — each exchange is processed somewhat independently.

- Limited scalability: As query complexity increases, NLP models often fail to generalize beyond their training distribution.
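
The "task-specific by design" limitation is easy to see in a minimal sketch. The classifier below is a toy, lexicon-based sentiment analyser. The word lists and the function name `classify_sentiment` are assumptions made up for illustration, and production NLP models are considerably more sophisticated.

```python
# Hypothetical word lists; a trained model would learn these signals from data.
POSITIVE = {"great", "fast", "helpful", "resolved", "excellent"}
NEGATIVE = {"slow", "broken", "outage", "failed", "terrible"}


def classify_sentiment(text):
    """Return 'positive', 'negative', or 'neutral' based on word counts."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(classify_sentiment("The helpdesk was fast and helpful!"))     # positive
print(classify_sentiment("VPN is broken again, terrible outage."))  # negative
print(classify_sentiment("Translate this sentence into French."))   # neutral
```

The third call shows the limitation: asked to translate, the model can still only answer with a sentiment label, because that is the single task it was built for.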

What is a Large Language Model (LLM)?

LLMs are a powerful subset of NLP that have been trained at massive scale — on trillions of tokens of text data — to perform a wide variety of language tasks simultaneously. Rather than being purpose-built for a single function, LLMs are general-purpose language engines.

The term ‘large’ in LLM refers to two things: the scale of the training data and the number of parameters in the model. GPT-3, for example, has 175 billion parameters, and the parameter counts of newer models such as GPT-4 have not been officially disclosed. Parameters are the numerical weights learned during training that encode the model’s understanding of language, facts, and reasoning.
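
A quick back-of-the-envelope calculation shows what parameter counts of this size mean in practice. The sketch below assumes each parameter is stored as a 16-bit (2-byte) number, which is a common but not universal choice. The 175-billion figure is the count published for GPT-3, and the 7-billion figure is simply an illustrative smaller model. The function name `weight_memory_gb` is made up for this example.

```python
def weight_memory_gb(num_parameters, bytes_per_parameter=2):
    """Approximate memory needed just to hold the model weights, in gigabytes."""
    return num_parameters * bytes_per_parameter / 1e9


print(weight_memory_gb(175e9))  # 350.0 (GB for a 175-billion-parameter model)
print(weight_memory_gb(7e9))    # 14.0 (GB for a 7-billion-parameter model)
```

This counts the weights only. Serving a model also needs memory for activations and caching, so real requirements are higher.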

How LLMs Extend and Overcome NLP Limitations

| Challenge | NLP Alone | LLM + NLP |
|---|---|---|
| Task versatility | Single task only | Handles many tasks simultaneously |
| Conversational context | Loses context between turns | Maintains multi-turn context fluently |
| Generalisation | Fails on unseen input types | Generalises across domains |
| Reasoning ability | Limited to pattern matching | Multi-step reasoning and inference |
| Training scale | Small, domain-specific data | Trillions of tokens, diverse domains |

LLMs in Practice

LLMs power today’s most prominent AI applications: ChatGPT, Google Gemini, Anthropic Claude, and Meta LLaMA are all LLM-based systems. In enterprise environments, LLMs are used for:

- Intelligent chatbots and virtual assistants for customer service and IT helpdesks

- Automated document summarisation and contract analysis

- Code generation, code review, and developer productivity tools

- Internal knowledge base Q&A systems

- Automated report generation and business intelligence narration

Limitations of LLMs

However, LLMs have a critical limitation: they are trained on static datasets with a fixed knowledge cutoff date. Once training is complete, the model’s knowledge is frozen. It cannot learn from new events, updated regulations, or revised company policies on its own.

Imagine an LLM trained on data up to early 2024. If your organization updates its security policy in late 2024, or a critical CVE is published, the LLM will be unaware of it — and may confidently provide outdated or incorrect guidance. This knowledge gap is where RAG becomes essential.

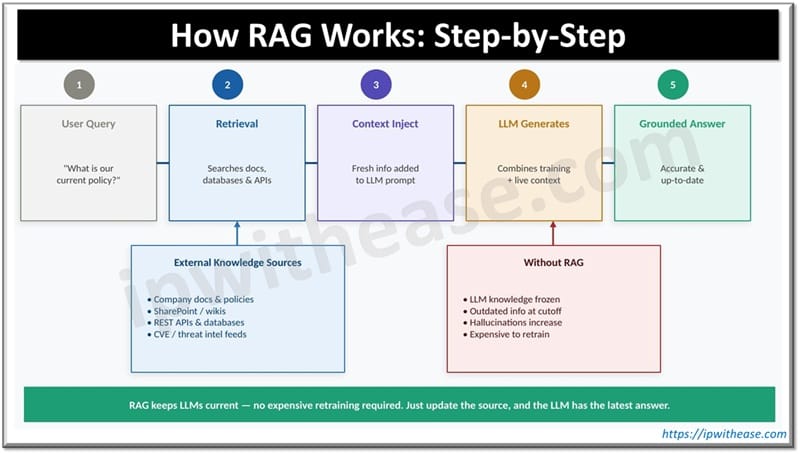

What is RAG (Retrieval-Augmented Generation)?

RAG is an architectural pattern that enhances LLMs by connecting them to external, up-to-date knowledge sources at query time. Rather than relying solely on what the LLM learned during training, RAG retrieves the most current and relevant information from external databases, documents, or APIs — and injects that information into the LLM’s context before generating a response.

How RAG Works: Step by Step

- User submits a query: A user asks the AI a question, for example, ‘What is our current remote access policy?’

- Retrieval phase: The RAG system searches external sources — internal document repositories, SharePoint, wikis, databases, or APIs — for content relevant to the query.

- Context injection: The retrieved, up-to-date content is passed to the LLM as additional context alongside the original query.

- LLM generates response: The LLM uses both its trained knowledge and the freshly retrieved information to formulate an accurate, grounded response.

- Bias and hallucination correction: If the LLM’s trained knowledge conflicts with the retrieved data, the system can be designed to flag the discrepancy and instruct the model to prefer the current, verified information.
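
The retrieval and context-injection steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: the documents are invented, retrieval uses simple word overlap where real systems typically use vector search, and the final LLM call is left out because it depends on whichever model provider you use. The names `DOCUMENTS`, `tokenize`, `retrieve`, and `build_prompt` are hypothetical.

```python
import re

# Hypothetical internal knowledge source (stand-in for SharePoint, wikis, etc.).
DOCUMENTS = {
    "remote-access-policy": "Remote access requires VPN with multi-factor "
                            "authentication as of the latest policy revision.",
    "password-policy": "Passwords must be at least 14 characters and rotated "
                       "only after a suspected compromise.",
    "leave-policy": "Employees accrue annual leave monthly and request it "
                    "through the HR portal.",
}


def tokenize(text):
    """Lower-case the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, documents, top_k=1):
    """Retrieval phase: rank documents by word overlap with the query."""
    query_tokens = tokenize(query)
    ranked = sorted(
        documents.items(),
        key=lambda item: len(query_tokens & tokenize(item[1])),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(query, retrieved):
    """Context injection: place the retrieved passages ahead of the question."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in retrieved)
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


query = "What is our current remote access policy?"
retrieved = retrieve(query, DOCUMENTS)
prompt = build_prompt(query, retrieved)
print(prompt)  # the generation step would send this prompt to an LLM
```

Telling the model to answer only from the supplied context is one simple way to steer it towards the retrieved information when that conflicts with what it learned in training.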

Why RAG is Economically and Operationally Superior

The alternative to RAG would be to retrain or fine-tune the LLM every time your organisation’s knowledge changes. This is:

- Prohibitively expensive: Training a large model from scratch can cost millions of dollars in compute resources.

- Time-consuming: Full retraining cycles can take weeks or months.

- Wasteful: The vast majority of the model’s knowledge does not need updating — only a small subset is outdated.

RAG solves this elegantly: you simply update the external knowledge source (a document, a database, an API endpoint), and the LLM immediately has access to that updated information the next time it is queried — no retraining required.
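
The "update the source, not the model" idea can be shown with a very small sketch. Here a plain dictionary stands in for the external knowledge source, and `lookup` and `build_context` are hypothetical helper names. The point is only that the context handed to the LLM changes as soon as the stored document changes.

```python
# Hypothetical knowledge store; in practice a document repository or database.
knowledge_base = {
    "remote-access-policy": "VPN access requires a password.",
}


def lookup(topic):
    """Stand-in for the retrieval step: read the store at query time."""
    return knowledge_base.get(topic, "No document found.")


def build_context(topic):
    """Assemble the context that would accompany the user's question."""
    return f"Context: {lookup(topic)}"


before = build_context("remote-access-policy")

# The policy changes: only the external store is edited, no model is retrained.
knowledge_base["remote-access-policy"] = (
    "VPN access requires a password and a hardware token."
)

after = build_context("remote-access-policy")
print(before)
print(after)
```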

Enterprise Use Cases for RAG

- Policy and compliance Q&A: Employees can query the latest HR policies, security guidelines, or regulatory frameworks — always getting current answers.

- IT service management: Helpdesk bots powered by RAG can answer questions about the latest network configurations, known issues, and change management records.

- Security operations: SOC teams can query the latest threat intelligence feeds, CVE databases, and incident response playbooks via a RAG-enhanced LLM.

- Legal and contract management: Legal teams can query the latest contract templates, regulatory updates, and jurisdiction-specific guidance.

- Product documentation: Support teams receive answers grounded in the most recent product release notes and technical documentation.

How They All Connect: The AI Ecosystem Hierarchy

Understanding the relationship between these five components is best visualized as a layered ecosystem:

| Layer | Component | Role in the Ecosystem |
|---|---|---|
| 1 (Broadest) | Artificial Intelligence (AI) | The overarching field: all intelligent, human-like computing behaviour |
| 2 | Generative AI (Gen AI) | The branch of AI focused on creating new content from learned patterns |
| 3 | NLP | The technology enabling AI to process and understand human language |
| 4 | LLMs | The large-scale, general-purpose models that execute NLP and Gen AI tasks |
| 5 (Narrowest) | RAG | The mechanism that keeps LLMs grounded in current, accurate information |

In a modern enterprise AI application, all five layers work in concert:

- The application is built on an AI foundation, defining the scope of intelligent behaviour required.

- Generative AI capabilities power the creative and language-generation aspects of the system.

- NLP processes user inputs — parsing queries, recognising intent, and structuring the request.

- The LLM performs the heavy lifting — reasoning, generating responses, maintaining conversational context.

- RAG ensures the LLM’s responses are grounded in the latest available information from trusted sources.

Think of AI as the building, Gen AI as the floor it occupies, NLP as the communication system, LLMs as the engine room, and RAG as the live news feed keeping the engine informed.

Common Misconceptions

“NLP and LLMs are the same thing”

They are not. NLP is a broad discipline; LLMs are a specific, large-scale implementation approach within that discipline. All LLMs perform NLP tasks, but not all NLP systems are LLMs. Traditional NLP models are far smaller, task-specific, and do not share the generative, conversational capabilities of LLMs.

“RAG replaces the LLM”

RAG does not replace the LLM — it enhances it. The LLM remains the reasoning and generation engine. RAG simply equips that engine with access to current, domain-specific information that was not available during training. They are complementary, not competing.

“Gen AI is just about chatbots”

Generative AI is a much broader category than conversational AI. It encompasses image generation (DALL-E, Midjourney), code synthesis (GitHub Copilot), video generation (Sora), audio synthesis (ElevenLabs), and more. Chatbots powered by LLMs are one application of Gen AI — but far from the only one.

“LLMs always give accurate information”

LLMs can hallucinate — confidently generating factually incorrect information when they lack knowledge or encounter ambiguous queries. This is a known, documented limitation. RAG significantly reduces (but does not entirely eliminate) hallucination by grounding responses in verified source documents.
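
One way to picture "grounding" is to check how much of an answer is actually supported by the source document. The sketch below is a crude word-overlap heuristic written for illustration only. It is not a real hallucination detector, and the stopword list, the example texts, and the function names `content_words` and `support_ratio` are all assumptions.

```python
import re

# Small, hypothetical stopword list so common words do not inflate the score.
STOPWORDS = {"the", "a", "an", "is", "are", "of", "to", "and", "in", "for", "be", "must"}


def content_words(text):
    """Return the set of lower-cased words in the text, minus stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def support_ratio(answer, source):
    """Fraction of the answer's content words that also appear in the source."""
    answer_words = content_words(answer)
    if not answer_words:
        return 0.0
    return len(answer_words & content_words(source)) / len(answer_words)


source = "Passwords must be at least 14 characters long."
grounded_answer = "Passwords must be at least 14 characters."
ungrounded_answer = "Passwords expire every 30 days."

print(support_ratio(grounded_answer, source))    # 1.0
print(support_ratio(ungrounded_answer, source))  # 0.2
```

A low ratio only signals that an answer deserves a second look. Because RAG reduces hallucination without eliminating it, checks like this complement human review rather than replace it.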

ABOUT THE AUTHOR

You can learn more about the author, Rashmi Bhardwaj, on her LinkedIn profile.