Cisco AI POD is a complete, ready-to-deploy AI infrastructure stack that includes compute, networking, storage, and AI software, all optimized to run AI workloads efficiently.

In simple terms, an AI POD is a modular infrastructure block designed to run AI training and inference workloads. Think of an AI POD as a “building block” for AI data centers. Each AI POD includes GPU servers, high-speed networking, storage, an AI software stack, and automation and management tooling. Multiple AI PODs can be combined to scale from 100 GPUs to 1,000, then 10,000, and up to 100,000 GPUs.

To simplify: one AI POD is one Lego block, and multiple AI PODs snapped together make a large AI data center.
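
The building-block arithmetic can be sketched in a few lines of Python. This is only an illustration: the article does not say how many GPUs a single AI POD holds, so `GPUS_PER_POD = 128` is a made-up figure, and `pods_needed` is a hypothetical helper name, not anything from Cisco tooling.

```python
# Hypothetical POD size. The article does not state a per-POD GPU count,
# so 128 is an assumption chosen purely for illustration.
GPUS_PER_POD = 128


def pods_needed(target_gpus: int, gpus_per_pod: int = GPUS_PER_POD) -> int:
    """Return the smallest number of identical PODs that reaches target_gpus."""
    if target_gpus < 0 or gpus_per_pod <= 0:
        raise ValueError("target_gpus must be >= 0 and gpus_per_pod must be > 0")
    # Integer ceiling division: round up so a partially filled POD still counts.
    return -(-target_gpus // gpus_per_pod)


if __name__ == "__main__":
    # The scaling steps mentioned in the article.
    for target in (100, 1_000, 10_000, 100_000):
        print(f"{target:>7} GPUs -> {pods_needed(target)} POD(s)")
```

Under the assumed 128 GPUs per POD, the four targets work out to 1, 8, 79, and 782 PODs. A real deployment would use the GPU count of the chosen POD configuration instead.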

AI workloads need very high bandwidth, low latency, GPU-to-GPU communication, and fast scaling. Traditional data center designs struggle to handle these demands efficiently, and AI PODs were created to close that gap.

Cisco AI POD

A Cisco AI POD is Cisco’s reference architecture for building AI-ready infrastructure. Cisco provides validated designs so that companies can deploy AI infrastructure faster.

Cisco has introduced AI PODs as a comprehensive, modular infrastructure designed to streamline the implementation of artificial intelligence within the enterprise. By integrating high-performance computing, specialized NVIDIA GPUs, and optimized networking, this solution supports the entire AI lifecycle from initial model training to real-time deployment. These pre-validated systems aim to reduce deployment times significantly while ensuring that sensitive data remains secure and compliant.

The platform offers unified management through centralized dashboards, allowing IT teams to oversee complex workloads across on-premises and hybrid cloud environments. Ultimately, this architecture serves as a scalable foundation that helps organizations transition their AI projects from pilot phases to full-scale production with greater efficiency.

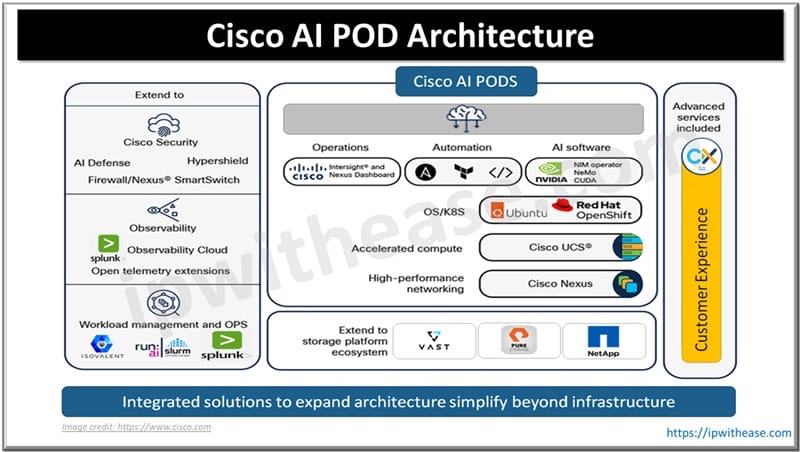

Cisco AI POD Architecture

Cisco AI PODs are built as pre-validated, full-stack solutions that include a specific set of hardware, software, and management tools designed to support the full AI lifecycle. Regardless of the specific configuration, they contain the following primary components:

Core Hardware

- Computing Servers: The solution uses Cisco UCS C845A M8 (PCIe GPU), UCS C885A M8 (HGX and OAM), and UCS X-Series servers.

- Accelerators: These servers are powered by NVIDIA and AMD GPUs to handle compute-intensive training and inferencing workloads.

- Networking: Cisco Nexus 9000 Switches serve as the high-performance fabric for the POD, with smart switches used for demarcation and segmentation in modern data centers.

Software Stack and Development Tools

- AI Platforms: The ecosystem integrates NVIDIA AI Enterprise and Red Hat OpenShift to provide a robust environment for building and deploying models.

- Frameworks: It supports leading software stacks, including PyTorch and TensorFlow, and provides robust APIs and tutorials for developers.

Management and Automation

- Centralized Management: Cisco Intersight provides SaaS-based management, which helps reduce infrastructure setup time.

- Automation: Deployment and operational tasks are streamlined using Ansible and Terraform automation.

Security and Observability Extensions

- Security Tools: The PODs include extensions for Cisco AI Defense and Hypershield to maintain data sovereignty and ensure enterprise-grade compliance.

- Observability: Integrated support for Splunk allows for comprehensive monitoring and observability of the AI infrastructure.

Services and Optional Components

- Professional Support: Cisco CX Services are included to provide a consolidated support model for the entire ecosystem.

- Optional Storage: While not required, the solution offers optional storage from partners like NetApp, Pure Storage, and VAST Data to assist data scientists with complex data management tasks.
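
As a rough way to picture the layers listed above, the sketch below models an AI POD as a Python dataclass and checks that every required layer has at least one component. The class name `AIPodBlueprint`, its field names, and the `missing_layers` helper are all hypothetical and chosen for illustration; they are not part of any Cisco API. The component names are the ones given in this article.

```python
from dataclasses import dataclass, fields, replace
from typing import List, Tuple

# The article lists partner storage as optional; every other layer is treated
# as required in this sketch (an assumption made for illustration).
OPTIONAL_LAYERS = {"storage"}


@dataclass(frozen=True)
class AIPodBlueprint:
    """Hypothetical model of the AI POD layers described in the article."""
    compute: Tuple[str, ...]
    accelerators: Tuple[str, ...]
    networking: Tuple[str, ...]
    ai_platforms: Tuple[str, ...]
    frameworks: Tuple[str, ...]
    management: Tuple[str, ...]
    automation: Tuple[str, ...]
    security: Tuple[str, ...]
    observability: Tuple[str, ...]
    support: Tuple[str, ...]
    storage: Tuple[str, ...] = ()  # optional partner storage

    def missing_layers(self) -> List[str]:
        """Return the names of required layers with no component assigned."""
        return [
            f.name
            for f in fields(self)
            if f.name not in OPTIONAL_LAYERS and not getattr(self, f.name)
        ]


pod = AIPodBlueprint(
    compute=("Cisco UCS C845A M8", "Cisco UCS C885A M8", "Cisco UCS X-Series"),
    accelerators=("NVIDIA GPUs", "AMD GPUs"),
    networking=("Cisco Nexus 9000",),
    ai_platforms=("NVIDIA AI Enterprise", "Red Hat OpenShift"),
    frameworks=("PyTorch", "TensorFlow"),
    management=("Cisco Intersight",),
    automation=("Ansible", "Terraform"),
    security=("Cisco AI Defense", "Cisco Hypershield"),
    observability=("Splunk",),
    support=("Cisco CX Services",),
)

if __name__ == "__main__":
    print(pod.missing_layers())                          # complete blueprint
    print(replace(pod, networking=()).missing_layers())  # fabric left out
```

Leaving `storage` empty still counts as a complete blueprint, which mirrors the article's point that partner storage is an add-on rather than a core requirement.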

How do Cisco AI PODs simplify the transition from pilot to production?

Cisco AI PODs simplify the transition from pilot to production by providing a full-stack, modular infrastructure specifically designed to support every stage of the AI lifecycle.

They streamline this transition through several key features:

- Accelerated Deployment: By utilizing pre-validated designs, AI PODs can reduce infrastructure setup time by up to 50 percent, significantly speeding up the time to value for AI projects.

- Lifecycle Support: The architecture is built to handle the entire progression of an AI project, including training, fine-tuning, and high-throughput inferencing, ensuring the infrastructure used for a pilot can scale to meet production demands.

- Unified Management: Integration with Cisco Intersight and Nexus Dashboard provides unified management, automation, and operational visibility. This makes it easier for IT and AI teams to manage the added complexity of production environments.

- Deployment Flexibility: AI PODs support on-premises, hybrid, or cloud integration, allowing organizations to move their AI models from a controlled pilot environment to diverse production settings, such as the network edge or multitenant GPU cloud environments, with ease.

- Scalability and Security: The PODs are engineered to address common production hurdles like scaling AI workloads and ensuring data security and compliance, which are often more critical in a production phase than during a pilot.

ABOUT THE AUTHOR

You can learn more about her on her LinkedIn profile – Rashmi Bhardwaj