
As your enterprise systems expand across regions, services, and cloud environments, cloud latency can become a bigger problem than it first appears. What starts as a small delay in one part of the stack can quickly turn into slower applications, inconsistent user experiences, reduced productivity, and weaker overall reliability.

That is exactly why cloud latency optimization deserves more attention today. If you are managing global application delivery, performance is not just about infrastructure. It is also about how well your architecture, services, databases, and delivery practices work together.

In this blog, you will learn what causes cloud latency, how DevOps performance engineering helps you uncover the real bottlenecks, and what you can do to reduce delays through smarter architecture, better delivery decisions, and stronger day-to-day operational practices.

What Cloud Latency Actually Means in Enterprise Environments

Cloud latency is the delay involved in sending a request across the network and receiving data back from a cloud-based service. In practical terms, it is one of the main reasons users may notice an application taking longer to load, return data, or complete an action.

In enterprise environments, that delay usually builds across multiple layers, including the network, the application, APIs, and the database. A small slowdown at each step can quickly add up, making the overall experience feel slower.

Cloud latency optimization is not just about fixing one slow component. In many enterprise systems, the real issue is the combined delay across the full request path. This is also why some enterprises look for end-to-end cloud engineering services that can improve performance across the stack rather than only at one layer.

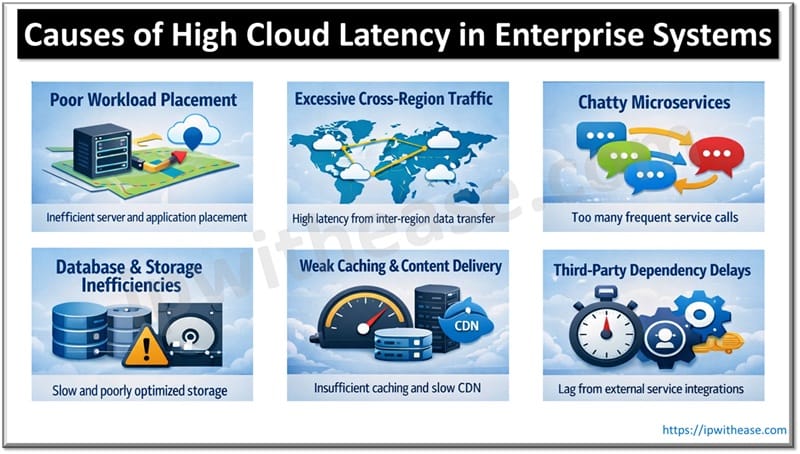

What Causes High Cloud Latency in Enterprise Systems

High cloud latency usually results from a mix of architecture choices, network distance, routing behavior, protocol overhead, and how distributed services and data stores are connected. In most cases, it is not caused by one big problem. It comes from a series of small delays across the system that add up over time. This becomes even more noticeable when businesses are managing global application delivery across different users, regions, and services.

Poor Workload Placement

Latency often increases when workloads are placed too far from end users or the services they rely on. If the application, database, and other services are spread out without proper planning, response times become slower.
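To make the distance effect concrete, here is a rough sketch of the lowest round-trip time that physical distance alone allows. It assumes light travels through optical fiber at about 200,000 km/s (roughly two-thirds of its speed in a vacuum). The function name and the 6,000 km figure are illustrative, and real paths are longer and slower because of routing, queuing, and processing.

```python
# Approximate signal speed in optical fiber (about two-thirds of c).
FIBER_SPEED_KM_PER_S = 200_000


def min_round_trip_ms(distance_km):
    """Lower bound on round-trip time from propagation delay alone.

    Ignores routing detours, queuing, TLS handshakes, and server time,
    so measured RTT will always be higher than this value.
    """
    if distance_km < 0:
        raise ValueError("distance_km must be non-negative")
    # Out and back (x2), converted from seconds to milliseconds (x1000).
    return 2 * distance_km * 1000 / FIBER_SPEED_KM_PER_S


if __name__ == "__main__":
    # Hypothetical user 6,000 km away from the workload.
    print(f"{min_round_trip_ms(6000):.0f} ms minimum RTT")
```

Under these assumptions, a user 6,000 km from the workload cannot see a round trip below about 60 ms, no matter how fast the application itself is.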

Excessive Cross-region Traffic

When data and service requests frequently move between cloud regions, delays increase. This is a common issue when optimizing multi-region cloud performance for enterprises with users in different locations.

Chatty Microservices

Microservices can increase flexibility, but they can also slow things down when a single request triggers too many internal service calls.

Database and Storage Inefficiencies

Slow queries, poor indexing, remote data access, and storage bottlenecks can all add extra delay.

Weak Caching and Content Delivery

If caching is weak, systems need to fetch data from the source more often. That adds more time to each request.

Third-party Dependency Delays

External APIs and third-party services can also slow response times, especially when their performance is inconsistent.

How DevOps Performance Engineering Identifies Latency Blocks

When latency starts affecting performance, guessing is not enough. You need to see exactly where requests are slowing down and which part of the system is causing it. That is where DevOps performance engineering plays a key role. It helps teams move from assumptions to clear, measurable insight.

By integrating performance checks into the delivery pipeline, businesses can shift from reactive troubleshooting to proactive optimization. This shift is a core part of how Cloud DevOps is transforming custom application development, as it builds monitoring and observability directly into the infrastructure.

To identify latency blocks, teams usually rely on:

- Distributed tracing

- APM

- Infrastructure monitoring

- Network monitoring

- Real user monitoring

- Synthetic testing

Together, these provide a clearer picture of whether the application, the network, the database, or an external service causes the delay.
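The idea behind tracing can be sketched with nothing more than timers around each stage of a request. The `RequestTrace` class and the stage names below are hypothetical and use only the Python standard library. A real setup would typically rely on a tracing or APM tool rather than hand-rolled timing.

```python
import time
from contextlib import contextmanager


class RequestTrace:
    """Records how long each named stage of one request takes."""

    def __init__(self, clock=time.perf_counter):
        self._clock = clock
        self.spans = []  # list of (stage_name, duration_seconds)

    @contextmanager
    def span(self, name):
        """Time the enclosed block and record it under `name`."""
        start = self._clock()
        try:
            yield
        finally:
            self.spans.append((name, self._clock() - start))

    def slowest(self):
        """Return the (stage_name, duration_seconds) pair with the longest duration."""
        return max(self.spans, key=lambda item: item[1])


if __name__ == "__main__":
    trace = RequestTrace()
    with trace.span("application"):
        time.sleep(0.01)  # stand-in for application logic
    with trace.span("database"):
        time.sleep(0.03)  # stand-in for a query
    print(trace.slowest()[0])
```

Even this simple breakdown shows which stage dominates a slow request, which is the question the tools above answer at production scale.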

It is also important not to rely only on average response time. Averages can appear healthy even when a smaller share of requests is significantly slower, affecting user experience. Metrics such as p95 and p99 latency are more useful for cloud latency optimization because they show the latency threshold within which 95% and 99% of requests are completed, helping teams identify tail-latency issues that averages often hide.
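A small sketch shows how an average can hide tail latency. The sample data is made up (94 requests at 80 ms and 6 at 1,200 ms), and `percentile` uses the nearest-rank method, which is one of several common percentile definitions. Monitoring tools may interpolate differently.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 <= pct <= 100:
        raise ValueError("pct must be between 0 and 100")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


# Hypothetical latencies in milliseconds: mostly fast, with a slow tail.
latencies_ms = [80] * 94 + [1200] * 6

mean_ms = sum(latencies_ms) / len(latencies_ms)
p50_ms = percentile(latencies_ms, 50)
p95_ms = percentile(latencies_ms, 95)
p99_ms = percentile(latencies_ms, 99)

if __name__ == "__main__":
    print(f"mean={mean_ms:.1f} p50={p50_ms} p95={p95_ms} p99={p99_ms}")
```

In this made-up sample the mean is about 147 ms and the median is 80 ms, yet p95 and p99 are both 1,200 ms. Roughly one request in seventeen is far slower than the average suggests.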

Key Metrics for Cloud Latency Optimization

These metrics help teams understand where latency is building up and how it affects users.

| Metric | What It Measures | Why It Matters |
|---|---|---|
| Response Time | Total request completion time | Reflects user experience |
| P95/P99 Latency | Latency within which 95% and 99% of requests complete | Reveals tail latency hidden by averages |
| Round-Trip Time (RTT) | Network delay | Helps identify routing or distance issues |
| Time to First Byte (TTFB) | Initial server responsiveness | Useful for web apps and APIs |
| Error Rate | Share of requests that fail | Connects performance to reliability |
| Packet Loss/Jitter | Network instability | Important for global application delivery |

How to Reduce Cloud Latency for Global Enterprises

Reducing cloud latency usually does not come down to one big fix. It comes from a series of practical decisions that improve performance across the stack. Strong cloud latency optimization starts with reducing distance, cutting unnecessary request paths, and making performance checks part of regular delivery.

1. Place workloads closer to users and dependent services

Latency often rises when applications, databases, and supporting services are spread too far apart. Keeping them closer to users and to each other helps reduce travel time and improves response speed.
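One way to reason about placement is to weight each candidate region's round-trip time by where users actually are. The sketch below is a simplified illustration. The region names, RTT figures, and traffic shares are invented, and a real decision would also account for data residency, cost, and where dependent services run.

```python
# Hypothetical measured RTTs (ms) from each user group to each candidate region.
RTT_MS = {
    "eu-users": {"region-eu": 20, "region-us": 95, "region-ap": 180},
    "us-users": {"region-eu": 95, "region-us": 25, "region-ap": 150},
    "ap-users": {"region-eu": 180, "region-us": 150, "region-ap": 30},
}

# Hypothetical share of total traffic coming from each user group.
USER_SHARE = {"eu-users": 0.5, "us-users": 0.3, "ap-users": 0.2}


def weighted_rtt_ms(region):
    """Average RTT to `region`, weighted by each user group's traffic share."""
    return sum(share * RTT_MS[group][region] for group, share in USER_SHARE.items())


def best_region(regions):
    """Pick the candidate region with the lowest traffic-weighted RTT."""
    return min(regions, key=weighted_rtt_ms)


if __name__ == "__main__":
    candidates = ["region-eu", "region-us", "region-ap"]
    for region in candidates:
        print(region, round(weighted_rtt_ms(region), 1))
    print("best:", best_region(candidates))
```

With these invented numbers the European region wins because half the traffic originates there. A different traffic mix would change the answer, which is why placement should be revisited as the user base shifts.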

2. Improve global application delivery with CDN and edge services

For enterprises serving users across locations, global application delivery has a direct impact on performance. CDNs and edge services can reduce latency by serving cacheable content and some edge-executed logic closer to users, while also reducing load on origin infrastructure.

3. Reduce service-to-service overhead

Some applications slow down because one request touches too many internal services. When teams simplify request flows and avoid unnecessary internal calls, the system becomes faster and easier to manage.
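When internal calls are independent of each other, issuing them concurrently instead of one after another is one way to cut the time a request spends waiting. The sketch below simulates three service calls with `asyncio.sleep`. The service names and delays are placeholders, and the approach does not apply when one call needs the result of another.

```python
import asyncio
import time

# Hypothetical internal services and their simulated response times (seconds).
SERVICES = {"pricing": 0.05, "inventory": 0.05, "profile": 0.05}


async def call_service(name, delay_s):
    """Stand-in for an internal service call; sleeps instead of doing network I/O."""
    await asyncio.sleep(delay_s)
    return name


async def fetch_sequential():
    """Call each service in turn; total time is roughly the sum of the delays."""
    start = time.perf_counter()
    results = [await call_service(name, delay) for name, delay in SERVICES.items()]
    return results, time.perf_counter() - start


async def fetch_concurrent():
    """Call all services at once; total time is roughly the slowest single delay."""
    start = time.perf_counter()
    results = await asyncio.gather(
        *(call_service(name, delay) for name, delay in SERVICES.items())
    )
    return list(results), time.perf_counter() - start


if __name__ == "__main__":
    _, sequential_s = asyncio.run(fetch_sequential())
    _, concurrent_s = asyncio.run(fetch_concurrent())
    print(f"sequential={sequential_s:.3f}s concurrent={concurrent_s:.3f}s")
```

Concurrency shortens the wait but does not reduce the number of calls, so it complements simplifying the request flow rather than replacing it.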

4. Optimize database and data-access performance

A slow database can hold up the entire application. Delays often come from inefficient queries, poor indexing, remote data access, or storage bottlenecks. Improving how data is stored and retrieved can remove a major source of latency.
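The effect of indexing can be checked by asking the database how it plans to run a query. The sketch below uses Python's built-in `sqlite3` module with an in-memory database. The `orders` table, its columns, and the index name are invented for illustration, and other database engines expose query plans through their own commands.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 1000, float(i)) for i in range(10_000)],
)

QUERY = "SELECT total FROM orders WHERE customer_id = ?"


def query_plan(connection):
    """Return SQLite's plan for QUERY as one string (the last column holds the detail text)."""
    rows = connection.execute("EXPLAIN QUERY PLAN " + QUERY, (42,)).fetchall()
    return " ".join(row[-1] for row in rows)


# Without an index, SQLite has to scan the whole table.
plan_before = query_plan(conn)

# With an index on the filtered column, it can search the index instead.
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
plan_after = query_plan(conn)

if __name__ == "__main__":
    print("before:", plan_before)
    print("after: ", plan_after)
```

The exact plan wording varies between SQLite versions, but the change from a full scan to an index search is the signal to look for.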

5. Build stronger caching layers

Caching helps avoid repeated trips to the source system. Whether it is application caching, edge caching, or data caching, the goal is the same: return frequently needed data faster and reduce backend load.
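As a minimal sketch of application-level caching, the class below keeps each value for a fixed time-to-live and only calls the source system again once the entry has expired. `TTLCache` and `get_or_load` are illustrative names, not a library API. A production cache would also need size limits, invalidation, and thread safety.

```python
import time


class TTLCache:
    """Tiny in-memory cache whose entries expire after a fixed time-to-live."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}  # key -> (expires_at, value)

    def get_or_load(self, key, loader):
        """Return the cached value for `key`, calling `loader(key)` on a miss or after expiry."""
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # still fresh: skip the trip to the source
        value = loader(key)
        self._entries[key] = (now + self._ttl, value)
        return value


if __name__ == "__main__":
    def slow_lookup(key):
        time.sleep(0.05)  # stand-in for a database or API call
        return key.upper()

    cache = TTLCache(ttl_seconds=30)
    print(cache.get_or_load("sku-1", slow_lookup))  # loads from the source
    print(cache.get_or_load("sku-1", slow_lookup))  # served from the cache
```

The time-to-live is the trade-off to tune: a longer value saves more backend trips but serves older data.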

6. Add performance validation to CI/CD

One of the most useful DevOps best practices for low-latency applications is to check performance before changes go live. Adding load tests, latency checks, and regression testing to CI/CD helps teams catch slowdowns early, rather than finding them in production.
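A latency check in a pipeline can be as simple as comparing the p95 from a load-test run against a fixed budget and against the previous baseline. The sketch below assumes the load test has already produced a list of latencies in milliseconds. The function names, the 10% regression allowance, and the thresholds in the usage section are all illustrative choices.

```python
import math


def p95(samples_ms):
    """Nearest-rank 95th percentile of the collected latencies."""
    if not samples_ms:
        raise ValueError("no latency samples collected")
    ordered = sorted(samples_ms)
    rank = math.ceil(95 * len(ordered) / 100)
    return ordered[rank - 1]


def check_latency(samples_ms, baseline_p95_ms, budget_p95_ms, max_regression=0.10):
    """Return a list of failure messages; an empty list means the check passed."""
    observed = p95(samples_ms)
    failures = []
    if observed > budget_p95_ms:
        failures.append(f"p95 {observed} ms exceeds budget of {budget_p95_ms} ms")
    if observed > baseline_p95_ms * (1 + max_regression):
        failures.append(
            f"p95 {observed} ms is more than {max_regression:.0%} above "
            f"baseline of {baseline_p95_ms} ms"
        )
    return failures


def exit_code(failures):
    """Map check results to a process exit code a CI step could return."""
    return 1 if failures else 0


if __name__ == "__main__":
    # Hypothetical load-test output: 90 fast requests and 10 slow ones.
    samples = [100] * 90 + [400] * 10
    problems = check_latency(samples, baseline_p95_ms=200, budget_p95_ms=300)
    for message in problems:
        print("FAIL:", message)
    print("exit code:", exit_code(problems))
```

A pipeline step could pass the result of `exit_code` to `sys.exit` so that a latency regression blocks the release the same way a failing unit test would.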

Multi-Region Cloud Performance: Key Trade-Offs to Consider

A multi-region setup can help improve speed by placing applications and services closer to users. That makes it useful for optimizing multi-region cloud performance, especially for enterprises serving different geographies.

But it also comes with trade-offs. Teams often have to manage:

- Higher infrastructure and operating costs

- More complex data replication

- Failover challenges during outages

- Weaker visibility across regions

- Consistency trade-offs when data must stay in sync

This is why multi-region design should be treated as part of an enterprise cloud strategy, not just a technical upgrade. It can improve performance, but only when the business is also ready to handle the added cost, complexity, and operational overhead.

Common Mistakes That Undermine Cloud Latency Optimization

Some latency issues stay unresolved because teams focus on the wrong areas. Common mistakes include:

- Treating latency as only a network problem when the delay may also come from the application, APIs, or database

- Looking only at average response time, even though it can hide slower requests and a poor user experience

- Using a single-region mindset for global systems where users and services are spread across different locations

- Ignoring database locality and creating extra distance between the application and the data

- Overcomplicating microservices so that one request ends up going through too many internal services

- Releasing changes without performance validation and finding latency issues only after deployment

Good cloud latency optimization depends on avoiding these mistakes and making performance part of the enterprise cloud strategy.

Cloud Latency Optimization Requires Continuous DevOps Discipline

Cloud latency optimization is not something you fix once and forget. As your systems grow across regions, services, and user locations, new delays can appear at any time. That is why performance needs regular attention, not occasional fixes.

Enterprises usually improve latency by making smarter placement decisions, building stronger delivery architecture, maintaining clear observability, and treating performance as part of everyday operations. This is where DevOps performance engineering becomes valuable. It helps teams stay in control as systems change. For businesses focused on global application delivery, the message is clear: low latency is easier to sustain when it becomes part of how systems are built, tested, and run every day.

Related FAQs

1. How do you know whether latency is hurting enterprise systems?

Latency is likely hurting your enterprise systems if users are facing slow response times, delayed transactions, inconsistent performance across regions, or frequent complaints about application speed. It can also show up in internal systems through slower workflows, lower productivity, and weaker service reliability. If performance issues are starting to affect customer experience or business operations, latency is already becoming a bigger problem.

2. What makes cloud latency optimization sustainable over time?

Cloud latency optimization becomes sustainable when it is treated as an ongoing part of how systems are built, monitored, and improved. That means checking performance regularly, testing changes before release, and making sure teams do not wait for users to report issues first. It works best when performance stays part of everyday operations, not a one-time fix.

3. Should cloud latency optimization be handled as an infrastructure task or a business priority?

It should be treated as both, but the business side is just as important. Latency may start as a technical issue, but its impact is usually felt in customer experience, productivity, reliability, and revenue-related workflows. That is why enterprise leaders should see it as more than an infrastructure concern.

4. When should an enterprise treat latency as a strategic issue?

An enterprise should treat latency as a strategic issue when slow performance begins to affect customers, employees, or business-critical systems across multiple locations. If latency is creating friction in digital experiences or slowing important operations, it needs leadership attention, not just technical troubleshooting.

5. What should CXOs ask their teams about cloud latency?

CXOs should ask where the biggest delays are happening, which users or regions are affected most, how performance is being measured, and whether latency is being tested before releases. They should also ask whether teams have clear visibility across the full system or are still guessing where the real problem is.

ABOUT THE AUTHOR

IPwithease is aimed at sharing knowledge across varied domains like Network, Security, Virtualization, Software, Wireless, etc.