What is a proxy in web scraping?

Before you set up a proxy network, it helps to understand what a proxy is and how it works. Once you know that, it becomes clear how a proxy can help a scraper avoid blocks.

An Internet Protocol (IP) address is a numeric label that identifies a device or network connected to the Internet. It can reveal your approximate geographic location and your Internet service provider, which is how some streaming (over-the-top) content providers restrict certain content by region.

A proxy is a service that masks your IP address by relaying your traffic through another server. When you use a proxy, the website you are visiting sees only the IP address of the proxy server, not your personal IP address. This makes it harder for websites to track you while you conduct sensitive data searches.
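
As a rough illustration of routing traffic through a proxy, here is a minimal Python sketch that uses only the standard library. The proxy address `proxy.example.com:8080` and the helper name `build_proxied_opener` are placeholders made up for this example, not part of any real service.

```python
import urllib.request

# Hypothetical proxy endpoint (assumption); replace it with a proxy you are allowed to use.
PROXY_URL = "http://proxy.example.com:8080"


def build_proxied_opener(proxy_url: str) -> urllib.request.OpenerDirector:
    """Return an opener that sends HTTP and HTTPS requests through proxy_url."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)


opener = build_proxied_opener(PROXY_URL)

# With a real proxy in place, a call such as
#     opener.open("https://example.com/", timeout=10)
# would be relayed by the proxy, so the target site sees the proxy's IP address.
```

Nothing is sent over the network until `opener.open(...)` is called, so the sketch can be adapted and inspected safely before pointing it at a real proxy.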

Why is a proxy server used?

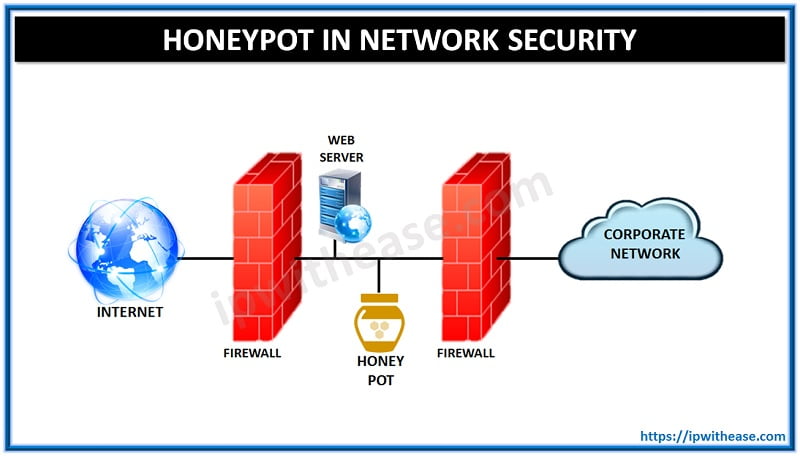

A proxy server acts as an intermediary between the client and the website servers, handling the requests and responses between them. It lets users on one network, or across several networks, access web content that their Internet service provider (ISP) may block, such as certain websites, file downloads or streaming videos. A proxy server can also be used to increase security on a company's network.

Why do you need proxies for web scraping?

Why has "proxy" become a buzzword in web scraping? Scraping large amounts of data from a protected website can be time-consuming and difficult, especially if you are not using a specialised data extraction or web scraping tool. The HTTP/HTTPS requests you send to the web server may get blocked for various reasons, for example because a firewall or the site's anti-bot rules flag your IP address.

The most common reasons for these blocks are:

IP Geolocation:

If the website detects that you are trying to scrape content not available in your region or that you are a bot, it may deny you access. If you really need that data for market research or understanding how a new product feature is working, you might be out of luck!

IP rate limitation:

Many website owners limit the number of requests they accept from any single IP address within a given time window. When you reach that limit, you will get an error message and might even have to solve a CAPTCHA before your request is processed. So before sending thousands of requests to scrape an e-commerce website for your next price prediction campaign, check the site's terms, or ask the site's owner, how many requests per IP address are allowed.
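
One common way to react to a rate limit is to slow down and retry with growing pauses. The sketch below assumes the site signals the limit with HTTP status 429 (Too Many Requests), which many sites do but not all. The `fetch` callable is a hypothetical stand-in for whatever function sends your request and returns its status code.

```python
import time
from typing import Callable


def fetch_with_backoff(
    fetch: Callable[[], int],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Call fetch() until it stops returning 429 or attempts run out.

    Waits base_delay, then 2x, then 4x ... seconds between attempts
    and returns the last status code seen.
    """
    status = fetch()
    for attempt in range(1, max_attempts):
        if status != 429:
            break
        sleep(base_delay * 2 ** (attempt - 1))  # exponential backoff
        status = fetch()
    return status


# Simulated server (assumption): rate-limits twice, then answers normally.
responses = iter([429, 429, 200])
waits = []
final_status = fetch_with_backoff(lambda: next(responses), sleep=waits.append)
```

Passing `sleep` as a parameter keeps the example fast to try out; in a real scraper you would leave the default `time.sleep` in place.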

So, what’s the best solution?

One way to reduce the risk of being blocked by a web server is to use a pool of proxies. By sending requests through different IP addresses, you keep the traffic from any single address below the server's limits, which makes your scraping much harder to detect and block, although not impossible. A proxy pool can also make your scraper faster, because requests can be spread across several addresses in parallel instead of waiting on one address's limit.
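
A proxy pool can be as simple as a list of addresses used in turn. The following sketch pairs each URL with the next proxy in round-robin order; the proxy addresses, the URLs and the helper name `assign_proxies` are all invented for illustration.

```python
import itertools
from typing import List, Tuple

# Hypothetical proxy pool (assumption); use proxies you are authorized to use.
PROXY_POOL = [
    "http://proxy1.example.com:8080",
    "http://proxy2.example.com:8080",
    "http://proxy3.example.com:8080",
]


def assign_proxies(urls: List[str], pool: List[str]) -> List[Tuple[str, str]]:
    """Pair each URL with a proxy, cycling through the pool round-robin."""
    rotation = itertools.cycle(pool)
    return [(url, next(rotation)) for url in urls]


urls = [f"https://example.com/products?page={n}" for n in range(1, 5)]
assignments = assign_proxies(urls, PROXY_POOL)

for url, proxy in assignments:
    print(f"{url} via {proxy}")
```

Round-robin is only one possible strategy. Picking a proxy at random, or retiring proxies that start returning errors, are common variations.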

How safe is a proxy server?

Proxy servers are generally legal to use, but you must be careful when using them. As long as your scraping logic adheres to the website's instructions, such as its terms of use and robots.txt, you are far less likely to run into trouble. It is important to follow best practices in web scraping and stay respectful of the websites you are scraping. Checking each site's robots.txt with a parser before you fetch a page can help keep your scraping activities compliant with the rules set by that website, preventing potential issues and maintaining ethical standards.
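
To show what respecting robots.txt can look like in practice, here is a small sketch that uses Python's standard `urllib.robotparser` module. The robots.txt content, the user agent name `my-scraper` and the URLs are made-up examples; a real scraper would load the file from the target site instead.

```python
from urllib.robotparser import RobotFileParser

# Example robots.txt content (assumption); normally fetched from the site itself.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def make_parser(robots_txt: str) -> RobotFileParser:
    """Build a parser from the text of a robots.txt file."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = make_parser(ROBOTS_TXT)

# Ask whether our (hypothetical) user agent may fetch each URL.
allowed = parser.can_fetch("my-scraper", "https://example.com/products/1")
blocked = parser.can_fetch("my-scraper", "https://example.com/private/data")
```

In a live scraper you could call `parser.set_url(...)` followed by `parser.read()` to download the real file, and then skip any URL for which `can_fetch` returns `False`.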

By using a proxy, it is possible to hide your computer’s true IP address and access pages that would otherwise be unavailable to you. Depending on the website you are trying to scrape, you can select from a wide range of proxies, such as data center proxies, whose IP addresses belong to hosting providers, and residential proxies, whose IP addresses are assigned by ISPs to home connections.

Alternatively, a proxy management service can help you streamline your data collection and reduce the effort required by web scraping. I would highly recommend this if you are looking to scale your web scraping efforts.

ABOUT THE AUTHOR

IPwithease is aimed at sharing knowledge across varied domains like Network, Security, Virtualization, Software, Wireless, etc.